There seems to be some layering rhythm to how software capabilities are harnessed to become applications. Every new technology tends to grow these four layers: Capabilities, Interfaces, Frameworks, and Applications.

There does not seem to be a way of skipping or short-cutting around this process. The four layers grow with or without us. We either develop these layers ourselves or they appear without our help. Understanding this rhythm and the cadence of layer emergence could be the difference between flopping around in bewilderment and growing a durable successful business. Here are the beats.

⚡ Capabilities

Every new technological capability usually spends a bit of time in a purgatory of sorts, waiting for its power to become accessible. It needs to traverse the crevasse of understanding: move from being grokable by only a handful of those who built it to some larger audience. Many technologies dwell in this space for a while, trapped in the minds of inventors or in the hallways of laboratories. I might be stating the obvious here: the process of inventing something is not enough for it to be adopted.

I will use large language models as the first example in this story, but if you look closely, most technological advances follow this same rhythm. The transformer paper and the general capability for building models have been around for a while, but until last year, they were mostly confined to the few folks who needed to understand the subject matter deeply.

🔌 Interfaces

The breakthrough usually comes in the form of a new layer that emerges on top of the capability: the Interfaces layer. This is typically what we see as the beginning of the technology adoption growth curve. The Interfaces layer can be literally the API for the technology or any other form of simplifying contract that enables more people to start using the technology.

The Interfaces layer serves as the democratizer of the Capabilities layer: what was previously only accessible to the select few – be that due to the complex nature of the technology, capital investment costs, or some other barrier – is now accessible to a much larger group. This new audience is likely still fractionally small compared to all the world’s population, but it must be numerous enough for the tinkering dynamic to emerge.

This tinkering dynamic is key to the success of the technology. Tinkerers aren’t super-familiar with how the technology works. They don’t have any of the deep knowledge or awareness of its limits. This gives them a tremendous advantage over the inventors of the technology – they aren’t trapped by the preconceived notions of what this technology is about. Tinkers tinker. Operating at the Interfaces layer, they just try to apply the tech in this way and that and see what happens.

Many research and development organizations make a crucial mistake by presuming that tinkering is something that a small group of experts can do. This usually backfires, because for this phase of the process to play out successfully, we need two ingredients: 1) folks who have their own ideas about what they might do with the capabilities and 2) a large enough quantity of these folks to actually start introducing surprising new possibilities.

Because of this element of surprise, tinkering is a fundamentally unpredictable activity. This is why R&D teams tend to not engage in it. Especially in cases when the societal impact of technology is unclear, there could be a lot of downside hidden in this step.

In the case of large language models, OpenAI and StabilityAI were the ones who decided that this risk was worth it. By providing a simple API to its models, OpenAI significantly lowered the barrier to accessing the LLM capabilities. Similarly, by making their Stable Diffusion model easily accessible, StabilityAI ushered in a new era of tinkering with multimodal models. They were the first to offer the large language models Interfaces layer.

Because it’s born to facilitate the tinkering dynamic, the Interfaces layer tends to be opinionated in a very specific way. It is concerned with reducing the burden of barriers to entry. Just like any layer, it does so by eliding details: to simplify, some knobs and sliders become no longer accessible to the consumer of the interface.
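To make the idea of eliding details concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `SamplingConfig`, `generate`, and `complete` are invented names standing in for a raw capability and a simplified interface on top of it, not any real library's API.

```python
from dataclasses import dataclass


@dataclass
class SamplingConfig:
    """Hypothetical knobs exposed at the Capabilities layer."""
    temperature: float = 1.0
    top_k: int = 50
    max_tokens: int = 256
    seed: int = 0


def generate(prompt: str, config: SamplingConfig) -> str:
    """Stand-in for the raw capability: every knob is visible to the caller."""
    # A real model call would go here; this stub only echoes its inputs.
    return f"[t={config.temperature} k={config.top_k} n={config.max_tokens}] {prompt}"


def complete(prompt: str, creative: bool = False) -> str:
    """Interfaces-layer wrapper: one switch instead of four knobs."""
    # The wrapper's authors choose the defaults; callers can no longer reach them.
    config = SamplingConfig(temperature=1.2 if creative else 0.2)
    return generate(prompt, config)
```

A tinkerer calling `complete("Write a haiku", creative=True)` never sees `top_k` or `seed`. That is the trade: a lower barrier to entry in exchange for fewer knobs.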

If the usage of the Interfaces layer starts to grow, this indicates that the underlying Capabilities layer appears to have some inherent value, and there is a desire to capture as much of this value as possible.

🔋 Frameworks

This is the point at which a new layer begins to show up. This third layer, the Frameworks, focuses on utility. This layer asks: how might we utilize the underlying Interfaces layer in more effective ways, and make it even more accessible to an even broader audience?

Utility might mean different things in different situations: in some, the value of rapidly producing something that works is the most important thing. In others, it is the overall performance or reliability that matters most. Most often, it’s some mix of both and other factors.

Whatever it is, the search for maximizing utility results in development of frameworks, libraries, tools, and services that consume the Interfaces layer. Because there are many definitions of utility and many possible ways to achieve it, the Frameworks layer tends to be the most opinionated of the stack.

In my experience, the diversity of opinion introduced in the Frameworks layer depends on two factors: the inherent value of the capability and the Interfaces layer’s own opinion.

The first factor is fairly straightforward: the more valuable the capability, the more likely there will be a wealth of opinions that will grow in the Frameworks layer.

The second factor is more nuanced. When the Interfaces layer is introduced, its authors build it by applying their own mental model of how the capability will be used via their interface. Since there aren’t actual users of the layer yet, it is at best a bunch of guesses. Then, the process of tinkering puts these guesses to the test. Surprising new uses are discovered, and the broadly adopted mental models of the consumers of the interface usually differ from the original guesses.

This difference becomes the opinion of the Interfaces layer. The larger this difference, the more effort the Frameworks layer will have to put into compensating for this difference – and thus, create more opportunities for even more diversity of opinion.

An illustration of how this plays out is the abundance of Web frameworks. Since the Web browser started out as a document-viewing application, it still has all of those early guesses firmly entrenched. Indeed, the main API for web development is called the Document Object Model. We all have moved on from this notion, asking our browsers to help us conduct business, entertain us, and work with us in many more ways than the original designers of this API envisioned. Hence, the never-ending stream of new Web frameworks, each trying yet another way to close this mental model gap.

It is also important to call out a paradox that develops as a result of the interaction between the Frameworks and the Interfaces layer. The Frameworks layer appears to simultaneously apply two conflicting pressures to the Interfaces layer below: to change and to stay the same.

On one hand, it is very tempting for the Interfaces layer maintainers to change it, now that their initial guesses were tested. And indeed, when talking to the Frameworks layer developers, the Interfaces layer maintainers will often hear requests for change.

At the same time, changing means breaking the existing contract, which creates all kinds of trouble for the Frameworks layer – these kinds of changes are usually a lot of work (see my deprecation two-step write-up from a while back) and take a long time.
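One common way to soften such a change is to keep the old entry point alive as a thin shim that warns and forwards to the new one. The sketch below assumes a hypothetical interface (`summarize` and `summarize_v2` are invented names), and it is a generic pattern, not necessarily the approach described in the write-up mentioned above.

```python
import warnings


def summarize_v2(text: str, *, max_chars: int = 100) -> str:
    """New entry point (hypothetical): keyword-only, clearer parameter name."""
    # Stand-in behavior: truncate the text to the requested length.
    return text[:max_chars]


def summarize(text: str, length: int = 100) -> str:
    """Old entry point, kept so existing frameworks keep working."""
    warnings.warn(
        "summarize() is deprecated; use summarize_v2(max_chars=...)",
        DeprecationWarning,
        stacklevel=2,  # point the warning at the caller, not at this shim
    )
    return summarize_v2(text, max_chars=length)
```

The shim can only be removed once the frameworks built on it have migrated, which is where much of the slowness comes from.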

The typical state for a viable Interfaces layer is that it is mired in a slow slog of constant change whose pace feels glacial from the outside. Once the Frameworks layer emerges, the Interfaces layer becomes increasingly challenging to evolve. For those of you currently in this slog, wear it as a badge of honor: it is a strong signal that you’ve made something incredibly successful.

The Frameworks layer becomes the de facto place where the best practices and patterns of applying the capability are developed and stored. This is why only after a decent Frameworks layer appears do we start seeing robust Applications actually utilizing the capability at the bottom of the stack.

📱 Applications

The Applications layer tops our four-stack of layers. This layer is where the technology finally faces its users – the consumers of the technological capability. These consumers might be the end users who aren’t at all technology-savvy, or they could be just another group of developers who are relieved to not have to think about how our particular bit of technology works on the inside.

The pressure toward maximizing utility develops at this layer. Consumer-grade software is serious business, and it often takes all available capacity to just stay in the game. While introducing new capabilities could be an appealing method to expand this business, at this layer, we seek the most efficient way possible to do so. The whole reason the Frameworks layer exists is to unlock this efficiency – and to further scale the availability of the technology.

This highlights another common pitfall: a research organization trying to ram a brand new capability right into an application, without thinking about the Interfaces and Frameworks layers between them. This usually looks like a collaboration between the team that builds at the Capabilities layer and the team that builds at the Applications layer. It is usually a sordid mess. Even if the collaboration nominally succeeds, neither participant is happy in the end. The Capabilities layer folks feel like they’ve got the most narrow and unimaginative implementation of their big idea. The Applications folks are upset because now they have a weird one-off turd in their codebase.

👏 All together now

Getting technology adopted requires cultivating all four layers. To connect Capabilities to Applications, we first need the Interfaces layer that undergoes a significant amount of tinkering, with a non-trivial amount of use case exploration that helps map out the space of problems that the new technology can actually solve. Then, we need the Frameworks layer to capture and embody the practices and patterns that trace the shortest paths across the explored space.

This is exactly what is playing out with the large language models. While ChatGPT is getting all the attention, the actual interesting work is happening at the Frameworks layer that sits on top of the large language model Interfaces layer: the OpenAI, Anthropic, and PaLM APIs.

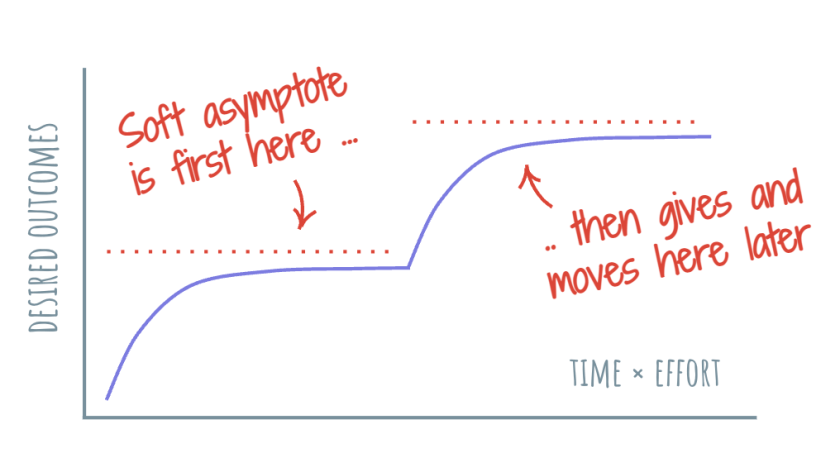

The all-too-common trough of disillusionment that many technological innovations encounter can be described as the period of time between the Capabilities layer becoming available and the Interfaces and Frameworks layers filling in to support the Applications layer.

For instance, if you want to make better guesses about the future of the most recent AI spring, pay attention to what happens with projects like LangChain, AutoGPT, and other tinkering adventures – they are the ones accumulating the recipes and practices that will form the foundation of the Frameworks layer. They will be the ones defining the shape of the Applications layer.

Here’s the advice I would give to any team developing a nascent technology:

- Once the Capabilities layer exists, immediately focus on developing the Interfaces layer. For example, if you have a cool new way to connect devices wirelessly, offer an API for it.

- Do make sure that your Interfaces layer encourages tinkering. Make the API as simple as possible, but still powerful enough to be interesting. Invest into capping the downside (misuse, abuse, etc.). For example, start with an invitation-only or rate-limited API.

- Avoid the comforting idea that just playing with the Interfaces layer within your team or organization constitutes tinkering. Seek out a diverse group of tinkerers. Example: opt for a public preview program rather than an internal-only hackathon.

- Prepare for the long slog of evolving the Interfaces layer. Consider maintaining the Interfaces layer as a permanent investment. Grow expertise on how to maintain the layer effectively.

- Once the Interfaces layer usage starts growing, watch for the emergence of the Frameworks layer. Seed it with your own patterns and frameworks, but expect them not to take root. There will be other great tool or library ideas that you didn’t come up with. Give them all love.

- Do invest in growing a healthy Frameworks layer. If possible, assume the role of the Frameworks layer facilitator and patron. Garden this layer and support those who are doing truly interesting things. Weed out grift and adversarial players. At the very least, be very familiar with the Frameworks landscape. As I mentioned before, this layer defines the shape of Applications to come.

- Do build applications that utilize the technology, but only to learn more about the Frameworks layer. Use these insights to guide changes in the Interfaces and Capabilities layers.

- Be patient. The key to finding valuable opportunities is in being present when these opportunities come up – and being the most prepared to pursue these opportunities.
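One of the suggestions in the list above, starting with a rate-limited API to cap the downside, can be sketched with a token bucket. This is a minimal illustration, not a production limiter: the `TokenBucket` class and its parameters are invented for this example, and the clock is injectable only so the behavior is easy to check.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(
        self,
        capacity: int,
        rate: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)  # start with a full bucket
        self.updated = clock()

    def allow(self) -> bool:
        """Return True if the call may proceed, consuming one token."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

An API front end could keep one bucket per key, for example `TokenBucket(capacity=5, rate=1.0)`, and reject a request whenever `allow()` returns `False`. The limit can be loosened later as the risks become better understood.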

If you orient your work around these four layers, you might find that the rhythm of the pattern begins to work for you, rather than against you.