- Solved, solvable, unsolvable

I have been noodling on a decision-making framework, and I am hoping to start writing it down as a sequence of pieces, in the style of Jank in Teams. You’ve probably seen glimpses of this thinking process in my posts over the last year or so, but now I am hoping to put it all together into one story across several short essays. I don’t have a name for it yet.

The first step in this adventure is quite ambitious. I would like to offer a replacement for the Cynefin framework. Dear Cynefin, you’ve been one of the highest-value lenses I’ve learned. I’ve gleaned so many insights from you, and from describing you to my friends and colleagues. I am not leaving you behind. I am building on top of your wisdom.

This new purported framework is no longer a two-by-two. Instead, it starts out as a layer cake of problem classes. Let us begin the story with their definitions.

At the top is the class of solved problems. Solved problems are very similar to those residing in Obvious space in Cynefin: problems that we no longer consider problems per se, since there’s a reliable, well-established solution to them. Interestingly, the solution does not have to be deeply understood for the problem to count as solved. Hammering things became a solved problem long before the physics that makes a hammer useful was discerned.

Then, there is a class of solvable problems. Cynefin’s Complicated space is a reasonable match for this class of problems. As the name implies, solvable problems don’t yet have solutions, but we have a pretty good idea of how they will look when solved. From puzzles to software releases, solvable problems are all around us, and as a civilization, we’ve amassed a wealth of approaches to solving them.

The final class of problems loosely corresponds to Complex space in Cynefin. These are the unsolvable problems. Unsolvable problems are just that: they have no evident solution. At the core of all unsolvable problems is a curious adaptive paradox: if the problem keeps adapting to your attempts at solving it, the solution will remain just out of reach. I wonder if this is why games like chess usually have a limited number of pieces and a clear victory condition. If the opponents are evenly matched, some limit is needed to make this potentially infinite game finite. Another way of thinking about unsolvable problems is that they are trying to solve you just as much as you’re trying to solve them.

You may notice that there is no corresponding match for Cynefin’s Chaotic space in this list. When describing Chaotic space, I’ve long recognized the presence of a clear emotional marker (disaster! emergency!) that seemed a bit out of place compared to how I usually described the other spaces. So, in this framework, I decided to make it orthogonal to the class of the problem. But let’s save this bit for later.

The interesting thing about all three classes is that they form a spectrum that I loosely grouped into three bands. Obviously, I tend to think in threes, so it’s nice and comfy for me to see the spectrum in such a way. But more importantly, each class appears to have a different set of methods and practices associated with it. You may already know this from our studies of Cynefin. Just think of how the effective approaches in Complex space differ from those in Complicated, and how both are different from those in Obvious.

Still, it is also pretty clear that the transition between these classes is fuzzy. As my child self was learning to tie shoes, the problem slowly traversed the spectrum. First, the tricky bendy laces that kept trying to escape my grasp (oh noes, unsolvable!?) became more and more familiar, while tying the crisp Bunny Ears knot, despite it being clearly and patiently explained, was a challenge (wait, solvable!). Then, this challenge faded, and tying shoes became an unbreakable habit (yay, solved). This journey across the problem class layers is a significant part of the framework, and something I want to talk about next.

- Mental models

I use the term “mental models” a lot, and so I figured – hey, maybe it’s time to do some semantic disambiguation and write down everything I learned so far about them?

When I say “mental model,” I don’t just mean a clean abstraction of “how a car works” or “our strategy” – even though these are indeed examples of mental models. Instead, I expand the definition, imagining something squishy and organic and rather hard to separate from our own selves. I tend to believe that our entire human experience exists as a massive interconnected network of mental models. As I mentioned before, my guess is that our brains are predictive devices. Without our awareness, they create and maintain that massive network of models. This network is then used to generate predictions about the environment around us. Some of these models indeed describe how cars work, but others help me find my way in a dark room, solve a math problem, or prompt the name of the emotion I am feeling in a given moment. Mental models are everything.

Our memories are manifestations of mental models. The difference between the remembering self and the experiencing self lies in the process of incorporating our experiences into our mental models. What we remember is not our experiences themselves. Instead, we recall the reference points of the environment in that vast network of models – and then we relive the moment within that network. Our memories play back a story with the setting and the cast of characters defined by our mental model.

This playback experience is not always like that black-and-white flashback moment in a movie. Sometimes it shows up as an annoying earworm song, or sweat on our palms in anticipation of a stressful moment, or just a sense of intuition. Mental models are diverse. They aren’t always visual or clothed in rational thought, or even conscious. They usually include sensory experiences, but most definitely, they contain feelings. More accurately, feelings are probably how our mental models communicate. A “gut feeling” is a mental model at work. Feelings tell us whether the prediction produced by a mental model is positive (feels good) or negative (feels bad), so that’s the most important information the model encapsulates. Sometimes these feelings are so nuanced and light that we don’t even recognize them as feelings – “I like this idea!” or “Hmm, this is weird, I am not sure I buy this” – and sometimes the feelings are touching-the-hot-plate visceral. Rational thinking is us learning how to spelunk the network of mental models to understand why we are feeling what we’re feeling.

One easy way to think of this network is as a massive parallel computer that is always running in the background, whether we’re asleep or awake. There are always predictions being made and evaluated. Unlike computers, our models aren’t structurally fixed. As we grow up, our models evolve, not just by getting better, but also in the ways they are created and organized. We can see this plainly by examining our memories. I may remember a painful experience from the past as a “terrible thing that happened to me” at first, and then, after living for a while, that “terrible thing” somehow transforms into “a profound learning moment.” How did that happen? The mental model didn’t sit still. The bits and pieces that comprised the context of the past experience have grown along with me, and shifted how I see my past experience.

We can also see that if my memory hasn’t changed over time, it’s probably worth examining. Large connected networks are notoriously prone to clustering. The seemingly kooky idea of the “whole self” is probably rooted in this notion that mental models are in need of gardening and deliberate examination. When I react to something in a seemingly childish way, it is not a stretch to consider: maybe the model I was relying on in that moment has indeed remained unexamined since childhood? And if so, there’s probably a cluster within my network of mental models that still operates on the environment drawn by a three-year-old’s crayon. This examination is a never-ending process. Our models are always inconsistent, sometimes a little, and sometimes a lot.

When I see a leader ungraciously lose their cool in a public setting, the thought that comes to mind is not whether their behavior is “right” or “wrong,” but rather that I’ve just been witness to a usually hidden, internal struggle of inconsistent mental models.

Our models never get simpler. I may discover a framing that opens up new room in a previously constrained space, allowing me to find new perspectives. Others around us start out as simple placeholders in our models and eventually grow into complex models themselves: models that recurse, capturing how these others think of us and perhaps even how they might think we think of them (nested models!). Over time, the network of models grows ever more complex and interconnected. At the same time, our models seamlessly change their dimensionality. Fallback fluidly influences how nuanced the model is, and thus the predictions that come up. Fallback is a focusing function. If my body believes I am in crisis, it will rapidly flatten the model, turning a nuanced situation into a simple “just punch this guy in the face!” directive, often without me realizing what happened.

I am guessing that every organism has a kind of mental model network within it. Even the simplest single-cell organisms contract when poked, which indicates that there’s a very primitive, but still predictive, model of the environment somewhere on the inside. It is somewhat of a miracle to see that humans have learned to share mental models with such efficiency. For us, sharing mental models is no longer limited to a few behaviors. We can speak, write, sing, and dance stories. Stories are our way to connect with each other and share our models, extending already-complex networks way beyond the boundary of an individual mind. When we say “a story went viral,” we’re describing the awe-inspiring speed at which a mental model can be shared. Astoundingly, we have also learned to crystallize shareable mental models through this phenomenon we call technology. Because that’s what all of our numerous aids and tools and fancy gadgets are: the embodiments of our mental models.

This is what I mean when I say “mental models.” It may seem a bit useless to take such a broad view. After all, if I am just talking about leadership, engineering, or decision-making, it’s very tempting to stick to some narrower definition. Yet at the same time, it is usually the squishy bits of the model where the trickiest parts of making decisions, leading, or engineering reside. Ignoring them just feels like… well, an incomplete mental model.

- A problem

To give the newly born decision-making framework a more solid grounding, we need to understand what a problem is. Let’s begin with a definition. A problem is an imposition of our intention on a phenomenon.

I touched on this notion of intention a bit in one of the Jank in Teams pieces, but here’s a recap. Our mental models generate a massive array of predictions, and it appears that we prefer some of these predictions to others. The union of these preferred predictions manifests as our intention. When we observe any phenomenon, we can’t help but impose our intention on it. The less the predicted future state of the phenomenon aligns with our intention, the more of a problem it is.

For example, suppose I am growing a small garden in my backyard. I love plants and they are amazing, but if they aren’t the ones that I intended to grow on my plot, they are a problem. Similarly, if I am shown a video of a cute bunny eating a carrot, I would not see the events documented by the videographer as a problem. Unless, that is, I am told that this video was just recorded in my garden. At this very instant, the fluffy animal becomes a harvest-destroying pest – and a problem.

I like this definition because it places problems in the realm of subjectivity. To become problems, phenomena need to be subject to a particular perspective. A phenomenon is a problem only if we believe it is a problem. Even world-scale, cataclysmic events like climate change are only a problem if our preferred future includes a thriving humanity and life as we know it. I also like how it incorporates intention and thus a desire to impose our will on a phenomenon. When we decide that something is problematic, we reveal our preferences for its future state.

Framing problems as a byproduct of intentionality also allows us to play with the properties of intention to see how they shift the nature of a problem. Looking at the discussion of the definition above, I can name a couple of such properties: the strength of intention and the degree of alignment. Let’s draw – you know it! – a 2×2, a tool that represents the continuous spectrum of these property values by their extremes. The vertical axis will be the degree of alignment between the current state of the phenomenon and our intention imposed on it, with alignment getting poorer toward the top. The horizontal axis will represent the strength of our intention, with intention getting stronger toward the left.

In the top-left quadrant, we’re facing a disaster. A combination of strong intention and poor alignment means that we view the phenomenon as something pretty terrible and looming large. The presence of a strong intention tends to have this quality. The more important it is for a phenomenon to be in a certain state, the more urgent and pressing the problem will feel for us. Another way to think of strength of intention is how existential the fulfillment of this intention is for us. If I need my garden to survive through the winter, it being overrun by a horde of ravenous bunnies will definitely fit into this quadrant.

Moving clockwise, the alignment is still poor, but our intention is not that strong. This quadrant is a mess. This is where we definitely see that things could be better, but we never seem to find time in our schedule to deal with the situation. Problems in this quadrant can still feel large in scope, indicating that the predicted future state of the phenomenon is far apart from the state we intend it to have. It’s just that we don’t experience the same existential dread when we survey them. Using that same garden as an example, I might not like how I planted the carrots in meandering, halting curves, but that would be a mess rather than a disaster.

The bottom-right quadrant is full of quirks. The degree of intention misalignment is small, and the intention is weak. Quirks aren’t necessarily problems. They can even be a source of delightful reflection, like that one carrot that sticks out of the row, seemingly trying to escape its kin.

The final quadrant is the bread and butter of software engineers. The phenomenon’s state is nearly aligned with our intention, but the strength of our intention makes even a tiny misalignment a problem. This is the bug quadrant. Fixing bugs is a methodical process of addressing relatively small, but important problems within our code. After all, if the bug is large enough, it is no longer a bug, but a problem from the quadrant above – a disaster.
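If it helps to see the 2×2 as a tiny decision procedure, here is a minimal Python sketch. It assumes that both the degree of alignment and the strength of intention can be squeezed onto a 0-to-1 scale, and the 0.5 `threshold` that splits each axis is an arbitrary, hypothetical choice. The names `Quadrant` and `classify` are made up for illustration. The real axes are continuous, so treat the four labels as the extremities of the spectrum rather than crisp boxes.

```python
from enum import Enum


class Quadrant(Enum):
    DISASTER = "disaster"  # poor alignment, strong intention
    MESS = "mess"          # poor alignment, weak intention
    QUIRK = "quirk"        # good alignment, weak intention
    BUG = "bug"            # good alignment, strong intention


def classify(alignment: float, strength: float, threshold: float = 0.5) -> Quadrant:
    """Place a phenomenon into one of the four quadrants.

    alignment: 0.0 means the predicted state is far from our intention,
               1.0 means it is fully aligned with it.
    strength:  0.0 means we barely care, 1.0 means the intention is existential.
    threshold: a hypothetical cut-off that splits each continuous axis in two.
    """
    for name, value in (("alignment", alignment), ("strength", strength)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    poorly_aligned = alignment < threshold
    strong = strength >= threshold
    if poorly_aligned:
        return Quadrant.DISASTER if strong else Quadrant.MESS
    return Quadrant.BUG if strong else Quadrant.QUIRK


# The garden examples from the text, with made-up numbers:
print(classify(alignment=0.1, strength=0.9).value)  # disaster: bunnies vs. a garden I need for winter
print(classify(alignment=0.2, strength=0.2).value)  # mess: carrots planted in meandering curves
print(classify(alignment=0.9, strength=0.1).value)  # quirk: the one carrot escaping its row
```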

- Understanding

Once the notion of a problem is sufficiently semantically disambiguated, we can proceed toward the next marker on our map: the concept of “understanding”. Ambitious, right?! There will be two definitions in this piece, one building on another. I’ll start with the first one. Understanding of a phenomenon refers to our ability to construct a mental model of it that makes reasonably accurate predictions about the phenomenon’s future state.

This definition places the presence of a mental model at the core of understanding. When we say that we understand something, we are conveying that a) we have a mental model of this something and b) more often than not, this model behaves like that actual something. The more accurate our predictions, the better we understand a phenomenon. At the extreme end of the spectrum of understanding is a model that is so good at making predictions that we literally don’t need to ever observe the phenomenon itself – the model acts as a perfect substitute. Though this particular scenario is likely impossible, there are many things around us that come pretty close. I don’t have to look at the stairs when I am climbing them. If I want to scratch my chin, I don’t need to carefully examine it: I just do it, sometimes automatically. In these situations, the rate at which the model makes prediction errors is low enough for us to assume that we understand the phenomenon. Of course, that makes rare prediction errors much more surprising, casting doubt on such assumptions.

Conversely, when we keep failing to predict what’s going to happen next with a thing we’re observing, we say that we don’t understand it. Our mental model of it is too incorrect, incomplete, or both, producing a high prediction error rate. Another related notion here is “legibility”. When we say something is legible, we tend to imply that we find it understandable, and vice versa, when we say that something is illegible, our confidence in understanding it is low. Think of legibility as a first-order derivative of understanding: it is our prediction of whether we can construct a model of the phenomenon with a low prediction error rate.

Rolling along with this idea of predicting predictability of a mental model, I’d like to bring another definition to this story and define “understanding of a problem.” I will do this in a way that may seem like sleight of hand, but my hope here is to both provide a usable definition and illuminate a tiny bit more of the abyssal depths of the nature of understanding. Here goes. Understanding a problem is understanding a phenomenon which includes us, the phenomenon that is the subject of our intention, and our intention imposed on it. See what I did there? It’s a definition turducken. Instead of producing something uniquely artisanal and hand-crafted, I just took my previous definition and stuffed it with new parameters! Worse yet, these parameters are just components of my previous definition of a problem: me, my intention, and the thing on which I impose that intention. However, I believe this is, as we say in software engineering, “working as intended”.

First, the definition provides a useful mental model of how we understand problems. We need to understand the problematic phenomenon and we need to understand the different ways we can influence it to make it less problematic. If I want a tennis ball to smack into a tree in my backyard to scare away the bunny eating my carrots — obviously I don’t want to harm the cute bunny! — I need to know how I can make that happen. Just like we’ve seen with legibility, understanding of a problem is a first-order derivative of understanding of a phenomenon. It’s the understanding of agency: how do I and the darned thing interact and what are my options for shifting it toward some future state that I intend for it?

Second, this definition exposes something quite interesting: once we see something as a problem, we entangle ourselves with it. If something is a problem, our understanding of it always includes understanding – a predictive model! – of ourselves. This model doesn’t have to be complete. For example, to throw a ball at a tree, I don’t need to have a deep understanding of my inner psyche. I just need to understand how my arm throws a ball, as well as how far and how accurately I can throw it.

Third, we can see that, under the influence of our intention, phenomena appear to form systems: interlinked clusters of mental models that are entangled with each other. And it’s usually the mental models of ourselves at the center of these entanglements, holding all of these clusters together and forming the network of mental models that I mentioned a few articles back. This might not seem profound to you, but it was a pretty revelatory insight for me. Our intentions are what establish our ever-more-complex network of mental models. Put differently, without us having preferences for some outcomes over others, there is no need for mental modeling.

There’s probably another force at play. Intention could also be our models influencing us. When we construct the model, we have two ways to interpret prediction errors. One is to treat them as information to incorporate into the model. Another is to treat them as a manifestation of a problem, a misalignment between the environment (mistaking the environment for our model of it) and our intention. Can we reliably tell these apart? It is possible that many of our intentions are just our unwillingness to incorporate the prediction error.

Finally, the “incomplete model” bit earlier is a hint that understanding is a paradox. The pervasive interconnectedness that we encounter in trying to understand the world around us reveals that there’s rarely such a thing as a single phenomenon of which to construct a mental model. If we attempt to model the whole thing, we run into what I call Sagan’s Pie: “If you wish to make an apple pie from scratch, you must first invent the universe.” A recognition that we ourselves are part of this universe flings us toward the asymptote of understanding. So, our ability to understand some things depends on us choosing not to understand other things, by drawing distinctions and breaking phenomena down into parts of the whole, and intentionally remaining ignorant of some. We strive to understand by choosing not to.

To clear the fog of philosophy somewhat, let’s distill this all into a few takeaways that we’ll stash for later use in this adventure:

- Understanding is iterative mental modeling, informed by prediction errors

- Problem understanding is about modeling ourselves in relation to the problem

- Problem understanding is also — and perhaps more so — about what we choose not to understand.

- A Solution

As we learned earlier, our understanding of a problem is a model that includes us, our intention, and the phenomenon that is the subject of that intention. A solution, then, is a prediction based on this understanding that resolves the problem’s intention by aligning the state of the phenomenon with it.

Because the problem’s model includes us, the solution often manifests as a set of actions we take. For example, if I am trying to repel that mischievous bunny from the previous piece, one solution might be this list: a) grab a tennis ball, b) aim at the tree nearby, c) throw the ball at the tree with all the force I can muster. However, solutions can also be devoid of our actions, like in that old adage: “if you ignore a problem long enough, it will go away on its own”.

Note that according to the definition above, a solution relies on the model, but is distinct from it. The same model might have multiple solutions. Additionally, a solution is distinct from the outcome. Since I defined it as a prediction, a solution is a peek into the future. And as such, it may or may not pan out. These distinctions give us just enough material to construct a simple framework to reason about solutions.

Let’s see… we have a model, a solution (aka prediction), and the outcome. All three are separate pieces, interlinked. Yay, time for another triangle! Let’s look at each edge of this triangle.

When we study the relationship between solution and outcome, we arrive at the concept of solution effectiveness, a sort of hit/miss scale for the solution. Solutions that result in our intended outcomes are effective. Solutions that don’t are less so. (As an aside, notice how the problem’s intention manifests in the word “intended”.) Solution effectiveness appears to be fairly easy to measure: just track the rate of prediction errors over time. The lower the rate, the more effective the solution is. We are blessed to be surrounded by a multitude of effective solutions. However, there are also solutions that fail, and to glimpse possible reasons why that might be happening, we need to look at the other sides of our triangle.
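Here is a minimal sketch of what tracking the rate of prediction errors over time could look like, assuming each attempt boils down to a yes/no answer to “did the outcome match what the solution predicted?” The `EffectivenessTracker` class and its sliding window are hypothetical illustrations, not part of the framework itself; the window simply keeps old outcomes from drowning out recent ones.

```python
from collections import deque


class EffectivenessTracker:
    """Tracks how often a solution's prediction misses, over recent attempts.

    Assumption: each attempt is reduced to a single yes/no answer to
    "did the outcome match the prediction?". The window size is arbitrary.
    """

    def __init__(self, window: int = 10) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        # Once the window is full, the oldest attempt falls off the left end.
        self._hits = deque(maxlen=window)

    def record(self, outcome_matched_prediction: bool) -> None:
        self._hits.append(outcome_matched_prediction)

    def error_rate(self) -> float:
        if not self._hits:
            raise ValueError("no attempts recorded yet")
        misses = sum(1 for hit in self._hits if not hit)
        return misses / len(self._hits)

    def effectiveness(self) -> float:
        # The lower the prediction error rate, the more effective the solution.
        return 1.0 - self.error_rate()


# Four throws at the tree; one of them failed to scare off the bunny.
tracker = EffectivenessTracker(window=4)
for hit in (True, False, True, True):
    tracker.record(hit)
print(tracker.effectiveness())  # 0.75
```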

The edge that connects solution and model signifies the possibility that our mental model of the problem contains an effective solution, but we may not have found it yet. Some models are simple, producing very few possible solutions. Many are complicated labyrinths, requiring skill and patience to traverse. When we face a problem that does not yet have an effective solution, we tend to examine the full variety of possible solutions within the model: “What if I do this? What if we try that?” When we talk about “finding a solution,” we usually describe this process. To firm this notion up a bit, a model of the problem is diverse when it contains many possible solutions. Solution diversity tends to be interesting only when we are still looking for one that’s more effective than what we currently have. Situations where the solution is elusive, yet the model’s solution diversity is low, can be rather unfortunate – I need to find more options, yet the model doesn’t give me much to work with. In such cases, we tend to look for ways to enrich the model.

This is where the final side of the triangle comes in. This edge highlights the relationship between the model and the outcome. With highly effective solutions, this edge is pretty thin, maybe even appearing non-existent. Lack of prediction errors means that our model represents the phenomenon accurately enough. However, when the solution fails to produce the intended outcome, this edge comes to life: prediction errors flood in as input for updating the model. If we treat every failure to attain the intended outcome as an opportunity to learn more about the phenomenon, our model becomes more nuanced, and subsequently, increases its solution diversity – which in turn lets us find an effective solution, completing the cycle. This edge of the triangle represents the state of flux within the model: how often and how drastically the model is being updated in response to the stream of solutions that failed. By calling it “flux”, I wanted to emphasize the updates that lead to “interesting” changes in the model: lack of prediction error is also a model update, but it’s not going to increase its diversity. However, outcomes that leave us stunned and unsure of what the heck is going on are far more interesting.

Wait. Did I just reinvent the OODA loop? Kind of, but not exactly. Don’t get me wrong, I love the Mad Colonel’s lens, but this one feels a bit different. Instead of enumerating the phases of the familiar circular solution-finding process, our framework highlights its components, the relationships between them and their attributes. And my hope is that this shift will bring new insights about problems, solutions, and us in their midst.

- Life of a solution

Looking at the framework in the previous piece, I am noticing that the components of the tripartite loop (aka the solution loop, apologies for not naming it earlier) form an interesting causal relationship. Check it out. Imagine that for every problem, there’s this process of understanding, or a repeated cycling through the loop. As this cycling goes on, the causality manifests itself.

Rising flux leads to rising solution diversity. This makes sense, right? More interesting updates to the model will provide a larger space for possible predictions. Rising solution diversity leads to rising effectiveness, since more predictions create more opportunities for finding a solution that results in the intended outcome. Finally, rising effectiveness leads to falling flux — the more effective the solution, the fewer interesting updates to the model we are likely to see. Once flux subsides past a certain point, we attest that the process of problem understanding has run its course. We now have a model of the phenomenon, ourselves, and our intention that is sufficiently representative to generate a reliably effective solution. We understood the problem.

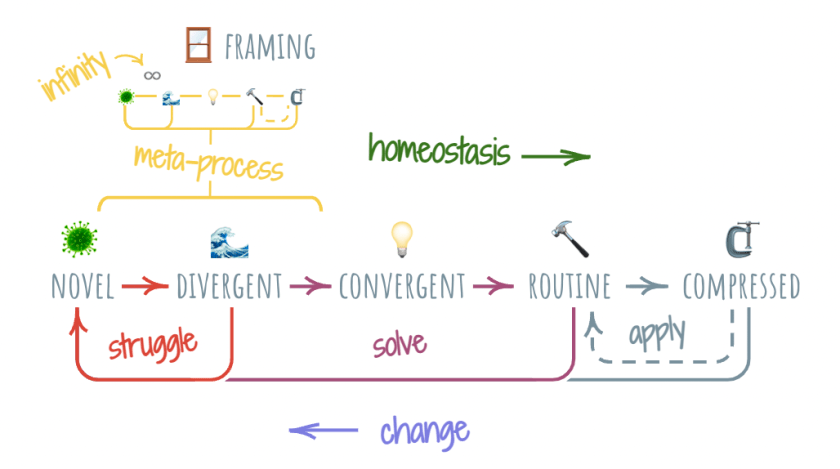

I am realizing that I can capture this progression in roughly four stages. At the first stage, effectiveness and diversity are both low, while flux is rapidly rising. This is the typical “oh crap” moment we all experience when encountering a novel phenomenon that is misaligned with our intention. Let’s call this stage “novel,” and assign it the oh-so-appropriate virus emoji.

Rising flux pushes us forward to the next stage that I will call “divergent”. Here, our model of the problem is growing in complexity, incorporating the various updates brought in by flux. This stage is less chaotic than the one before, but it’s usually even more uncomfortable. We are putting in a lot of effort, but the mental models remain squishy and there are few well-known facts. Nearing the end of the stage, there’s a sense of cautious excitement in the air. While the effectiveness of our solutions is still pretty low, we are starting to see a bit of a lift: all of that model enrichment is beginning to produce intended outcomes. Soon after, the next stage kicks in.

The convergent stage sees a continued, steady rise in effectiveness. Correspondingly, flux starts to ease off, indicating that we have the model figured out, and now we’re just looking for the most effective solution. This stage feels great for us engineering folks. Constraints appear to have settled in their final resting places. We just need to figure out the right path through the labyrinth. Or the right pieces of the puzzle. Or the right algorithm. We’ve got it.

After a bit more cycling of the loop, we finally arrive at the routine stage: the much-desired steady state of understanding the problem well enough for it to become routine, where solving it is more of a habit than a bout of strenuous mental gymnastics. The problem has become boring.
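To make the four stages a bit more tangible, here is a rough Python sketch that names the stage from a single reading of the loop. It assumes that effectiveness, diversity, and flux can each be normalized to a 0-to-1 range and that we know whether flux is rising or falling; the `LOW` and `HIGH` cut-offs and the `LoopReading` type are hypothetical. It also treats “divergent” as the catch-all stage, which is a simplification of the much fuzzier transitions described above.

```python
from dataclasses import dataclass

# Hypothetical cut-offs for readings normalized to the 0..1 range.
LOW, HIGH = 0.3, 0.7


@dataclass
class LoopReading:
    effectiveness: float  # share of attempts that produced the intended outcome
    diversity: float      # how many possible solutions the model offers, normalized
    flux: float           # how much "interesting" model updating is happening
    flux_delta: float     # change in flux since the previous trip around the loop


def stage(r: LoopReading) -> str:
    """Name the stage of problem understanding suggested by one reading."""
    if r.effectiveness >= HIGH and r.flux <= LOW:
        return "routine"      # solving is a habit; the model has gone quiet
    if r.effectiveness <= LOW and r.diversity <= LOW and r.flux_delta > 0:
        return "novel"        # the "oh crap" moment: little to work with, flux rising
    if r.effectiveness > LOW and r.flux_delta < 0:
        return "convergent"   # effectiveness climbing while flux eases off
    return "divergent"        # the model is growing, effectiveness still low


print(stage(LoopReading(effectiveness=0.1, diversity=0.1, flux=0.4, flux_delta=0.2)))    # novel
print(stage(LoopReading(effectiveness=0.2, diversity=0.6, flux=0.8, flux_delta=0.1)))    # divergent
print(stage(LoopReading(effectiveness=0.5, diversity=0.8, flux=0.5, flux_delta=-0.2)))   # convergent
print(stage(LoopReading(effectiveness=0.9, diversity=0.8, flux=0.1, flux_delta=-0.05)))  # routine
```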

The progression from novel to routine is something that every problem strives to go through. Sometimes it plays out in seconds. Sometimes it takes much longer. However, my guess is that this process isn’t something that we can avoid when presented with problems. It appears to be a general sequence that falls out of how our minds work. I want to call the pressure that animates this sequence the force of homeostasis. This force propels us inexorably toward the “routine” stage of the process, where the ongoing investment of effort is at its lowest value. Our bodies and our minds are constantly seeking to reach that state of homeostasis as quickly as possible, and this search is what powers this progression.

- Change

So far, I have carefully avoided the topic of change, presenting my problem-solving realm in a delightfully modernist manner. “See phenomenon? Make a model of it! Bam! Now we’re cooking with gas.”

Alas, despite its wholesome appeal, this picture is incomplete. Change is ever-present. As the movie title says, everything, everywhere, all at once – is changing, always. Some things change incomprehensibly quickly and some change so slowly that we don’t even notice the change. At least, at first. And this ever-changing nature of the environment around us presents itself as its own kind of force.

While the force of homeostasis is pushing us toward routine, the force of change is constantly trying to upend it. As a result of these forces dancing around each other, our problems tend to walk the awkward gait of punctuated equilibrium: an effective solution appears to have settled down, then after a while, a change unmoors it and the understanding process repeats. The punctuated equilibrium pattern appears practically everywhere, indicating that this might be another general pattern that falls out of the underlying processes of mental modeling.

Throughout this repeating sequence, the flux and effectiveness components wobble up and down, just like we expect them to. However, something interesting happens with the model diversity: it continues to grow in a stair-step pattern.
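Here is a toy simulation of that pattern, with loudly stated assumptions that are mine rather than part of the framework: each change resets flux to 1.0, flux then decays geometrically as the solution settles, and every bit of flux gets folded into the model, so diversity is simply the running total of flux. The `simulate` function and its `decay` parameter are hypothetical.

```python
def simulate(cycles: int, changes: set[int], decay: float = 0.2) -> list[tuple[int, float, float]]:
    """Toy model of punctuated equilibrium in the solution loop.

    Assumptions (not from the essay): a change resets flux to 1.0, flux then
    decays geometrically as an effective solution settles in, and every bit of
    flux is folded into the model, so diversity is the running total of flux.
    """
    if not 0.0 <= decay < 1.0:
        raise ValueError("decay must be in [0, 1)")
    flux, diversity = 0.0, 0.0
    history = []
    for t in range(cycles):
        if t in changes:
            flux = 1.0      # a change unmoors the settled solution
        else:
            flux *= decay   # the loop calms down as effectiveness returns
        diversity += flux   # interesting updates only ever add to the model
        history.append((t, flux, diversity))
    return history


for t, flux, diversity in simulate(cycles=9, changes={0, 3, 6}):
    print(f"cycle {t}: flux={flux:.2f} diversity={diversity:.2f}")
```

Flux spikes at each change and fades, while diversity never goes down: it jumps at cycles 0, 3, and 6 and then levels off, tracing the stair-step shape.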

If you’ve read my stories before, you may recognize the familiar stair-step shape from my ongoing fascination, the adult development theory (ADT). It seems to rhyme, doesn’t it? I wonder if the theory itself is a story that is imposed upon a larger, much more fractally manifesting process of mental modeling. The ADT stages might be just a slice of it, discerned by a couple of very wise folks and put into a captivating narrative.

Every revolution of the process of understanding adds to our model, making us more capable of facing the next round of change. Sometimes this process is just refining the model. Sometimes it’s a transformational reorganization of it. This is how we learn.

Moreover, this might be how we are. This story of learning is such a part of our being that it is deeply embedded into culture and even has a name: the hero’s journey. The call to adventure, the reluctance, the tribulations, and facing the demons to finally reveal the boon and bring it back to one’s people is a deeply emotional description of the process of understanding. And often, it has the wishful “happily ever after” bookend – because this would be the last change ever, right? It’s another paradox. It seems that we know full well that change is ever-present, yet we yearn for stability.

For me, this rhymes with Damasio’s notion of homeostasis. Contrary to the common belief that homeostasis is about equilibrium, in The Strange Order of Things, he describes how, from our perspective, homeostasis is indeed about reaching a stable state… and then leaning a bit forward to ensure flourishing. It’s as if our embodied intuition accepts the notion of change and prepares us for it, even as our minds continue to weave stories of eternal bliss.

- Model compression

At the end of each journey in our process of understanding, we have an effective solution to the problem we were presented with. Here’s an interesting thing I am noticing. We still have a diverse, deeply nuanced mental model of the problem that we developed by cycling through the solution loop. However, we don’t actually need the full diversity of the model at this point. We found the one solution that we actually need when approaching the given problem.

This is a pivotal point at which our solution becomes shareable. To help others solve similar problems, we don’t need to bestow the full burden of our trials and errors upon them. We can just share that one effective solution. In doing so, we compress the model, providing only a shallow representation of it that covers just enough to describe the solution.

This trick of model compression seems simple, but it ends up being nothing short of astounding. Let’s start with an example of simple advice, like that time when an expert showed me how to properly crack an egg and I almost literally felt the light bulb go off in my head. It would have taken me a lot of cycling through the solution loop to get anywhere close to that technique. Thanks to the compressed model transfer, I was able to bypass all of that trial and error.

Next, I invite you to direct your attention to the wonder of a modern toothbrush. An immeasurable number of separate solution loop iterations went into finding the right shape and materials to offer this compressed model of dental hygiene. To keep my teeth healthy, I don’t have to know any of that. I only need a highly compressed model: how to work the toothbrush. This ability to compound is what makes model compression so phenomenally important.

We live in a technological world. We are surrounded by highly compressed mental models that are themselves composed of other highly compressed models, recursing on and on. I am typing this little article on a computer, and if I stop to imagine an uncompressed mental model of this one device, from raw materials scattered unfound across the planet to the cursor blinking back at me, my mind boggles in awe. To type, I don’t have to know any of that. Despite us taking it for granted, our capacity to compress and share models might just be the single most important gift that humanity was given – aside from being able to construct these models, of course.

Model compression introduces a peculiar extra stage to the process of understanding. At this fifth stage, our solution effectiveness is high, flux is low, but our model diversity is low as well. When we acquire a compressed model – whether through technology or a story – we don’t inherit the rich diversity of the model. We don’t get the full experiential process of constructing it. We just get the most effective solution.

It feels like a reasonable deal, yet there is a catch. As we’ve learned earlier, things change.

When my solution is at this newly discovered “compressed” stage, a new change will expose this stage’s brittleness: I don’t have the diversity of the model necessary to continue climbing the stair steps of understanding. Instead, it appears that I need to start problem-solving from scratch. This does make intuitive sense, and the compounding of compressed models makes it even more apparent. When a modern phone suddenly stops working, we have only a couple of things we can try to resuscitate it: plug in the charger and/or hold down the power button and hope it comes back. If it doesn’t, the vastness of crystallized model compression makes it as good as a pebble. Chuck it into a drawer or into a lake – not much else can happen here.

Lucky for us, this phenomenon of compressed models being brittle in the face of change is a problem in itself – which means that we can aim our solving ability at it. If we’re really honest about it, software engineering is not really about writing software. It’s about writing software that breaks less often and, when it does, breaks in graceful ways. So we’ve come up with a neat escape route out of this particular predicament. If my toothbrush breaks or wears out, I just replace it with a new one from the five-pack in which they usually come. If my laptop stops working, I take it to a “genius” to have it fixed. Warranties, redundancies, and repair facilities – all of these solutions rely on someone else possessing – and maintaining! – a diverse mental model for me to lean on.

This shortcut works great in so many cases that I probably need to draw a special arrow on our newly updated diagram of the process of understanding. There are two distinct cycles that emerge: the already-established cycle of learning, and the applying cycle, where I can only use compressed models obtained through learning – even if I didn’t do the learning myself! Both are available to us, but the applying cycle feels much more (like orders of magnitude) economical to our force of homeostasis. As a result, we constantly experience the gravitational pull toward this cycle.

- Model compression and us

Often, it almost seems like if we run the process of understanding long enough, we could just stay in the applying cycle and not have to worry about learning ever again. Sure, there’s change. But if we study the nature of change, maybe we can find the underlying causes of it and incorporate it into our models – thus harnessing the change itself? It seems that the premise of modernism was rooted in this idea.

If we imagine that learning is the process of excavating a resource of understanding, we can convince ourselves that this resource is finite. From there, we can start imagining that all we have to do is – simply – run everything through the process of understanding and arrive at the magnificent state where learning is more or less optional. History has been rather unkind to these notions, but they continue to hold great appeal, especially among us technologists.

Alas, as technology combines with a large-enough number of people, it seems that we unavoidably grow our dependence on the applying cycle. In organizations where only compressed models are shared, change becomes more difficult. There isn’t enough mental model diversity within the ranks to continue the cycle of understanding. If such organizations don’t pay attention to the attrition of their veterans, the ones who knew how things worked and why, they find themselves in a Chesterton’s fence junkyard. At that point, their only options are to anxiously keep holding on to truisms they no longer comprehend or to plunge back to the bottom of the stairs and re-learn, generating the necessary mental model diversity by grinding through the solution loop, all over again.

I wonder if the nadir of the hero’s journey is marked by suffering in part because the hero discovers first-hand the brittleness of model compression. Change is much more painful when most of our models are compressed.

At a larger scale, societies first endure horrific experiences and acquire embodied awareness of social pathologies, then lose that knowledge through compression as it is passed along to younger generations. Deeply meaningful concepts become monochrome caricatures, thus setting up the next generation to repeat the mistakes of their ancestors. More often than not, the caricatures themselves become part of the very pathology that their uncompressed originals were learned to prevent.

In a highly compressed environment, we often experience the process of understanding in reverse. Instead of starting with learning and then moving on to applying, we start with the application of someone else’s compressed models and only then – optionally – move on to learning them. Today, a child is likely to first use a computer and only then understand how it works, more than likely never fully grasping the full extent of the mental model that goes into creating one. Our life can feel like an exploration of a vast universe of existing compressed models, with only a faint hope of ever fully understanding some of them.

From this vantage point, we can even get disoriented and assume that this is all there is, that everything has already been discovered. We are just here to find it, dust it off, and apply it. No wonder the “Older is Better” trope is so resonant and prominent in fiction. You can see how this feeds back into the “excavating knowledge as a finite resource” idea, reinforcing the pattern.

In this way, pervasive model compression appears pretty trappy. Paradoxically, the brittle nature of highly compressed environments makes them less stable. The very quest to conquer change results in more – and more dramatic – change. To thrive in these environments, we must put in conscious effort to mitigate the compression trap. We are called to deepen the diversity of our mental models and let go of the scaffolding provided by the compressed models of others.

- Touching infinity

As we explore the process of understanding, it may not be immediately obvious why change isn’t conquerable, and why knowledge isn’t a finite resource, as the siren of modernism sweetly suggests. As far as infinity goes, there are infinite stories to convey it, and here’s but one of them. It’s an examination of a particularly interesting kind of change: reciprocal adaptation.

Adaptation is all around us, and is largely responsible for the never-ending change. For example, when I rest on a tree stump in the forest after a long hike, I may notice a fragrant flower bush abuzz with bees. I am seeing the effects of adaptation. Over the eons, flowers adapted to attract bees to solve their problem of pollination (my sincere apologies to any passing biology experts – I know too little of the subject to speak so confidently about it).

However, if I notice large yellow eyes examining me through the forest’s canopy, I am experiencing another kind of adaptation. The predator is trying to build its own mental model of me. At that moment, I am its problem: the current nature-enjoying me as “what is” and the meal version of me as “what ought to be”. Obviously, this makes the predator’s intent a problem for me – and thus engages me in reciprocal adaptation.

In a non-reciprocal adaptation, our understanding of the problem must include some hypotheses on how the phenomenon’s behavior changes over time. Even though it is already a pretty challenging task, we can choose to be careful, neutral observers of the phenomenon. With such commitment, we still have a chance at arriving at the model that produces an effective solution. For this kind of adaptation, the process of understanding looks like the one I described earlier.

Once we find ourselves in reciprocal adaptation, things get rather hairy. Two or more entities see each other as problems – or at least, as parts of them. Each continuously develops a mental model of the problem that includes itself, the other, and their intentions. In such situations, we are no longer neutral observers: every solution we try is used by the other parties to adjust their mental models, thus invalidating the models of them that we keep developing.

A pernicious fractal weirdness emerges. When you and I are locked in reciprocal adaptation, your intention is my problem, which means that my model of the problem now has to include your intention. Because I am part of your “what ought to be”, a mental model of me (how you model me) is now embedded in my model of you. In other words, not only do I need to model you, I also need to model how you model me. To produce an effective model, I also need to model how you model my modeling of you, and so on. And you have little choice but to do the same.

In this hall of mirrors, despite all parties acquiring more and more diverse models, we are not reaching that satisfying solution effectiveness found in other situations. Every interaction between us rejiggers the nested dolls of our mental models, and so the process of understanding looks bizarre, with effectiveness wobbling unsteadily or hitting invisible asymptotes. The “convergent” stage keeps getting subverted back into “novel”, and the “routine” stage of the process of understanding never develops. Correspondingly, the effort stays pegged at maximum, while the valence of our feelings about the situation remains negative.

This under-developed learning cycle is something that happens to us anytime we touch infinity. We struggle and we feel out of our depth. To illustrate this in our ever-growing process diagram, we’ll add an extra short-circuit from the “convergent” stage back to the “novel” stage, splitting the “learn” cycle into two. We’ll name the outer part of it the “solve” cycle, since it does culminate in arriving at an effective solution.

Let’s call the shorter circuit the “struggle” cycle. I picked this name because inhabiting this cycle is stressful and unpleasant – the effort remains at maximum for prolonged periods of time, exhausting us. The force of homeostasis tends to rather dislike these situations. It’s literally the opposite of the “apply” cycle – lots of energy goes into it. A good marker of touching infinity is that sense of rising unease, progressing toward full-blown terror. My guess is that this is our embodied warning mechanism, honed by evolution to steer us clear of it.

When we’re in the “struggle” cycle, we gain one additional problem. You know, as if it wasn’t enough to struggle with infinity, right? This additional problem stems from our intention to exit this cycle as quickly as possible. We even come pre-wired with a few solutions to break out of this cycle: fight, flight, and freeze. As an aside, I described this same phenomenon differently in “Model flattening” a while back, but hey: infinity and its infinite stories. These built-in solutions are what helped our cave-dwelling ancestors survive, and we’re grateful for their contribution to humanity’s progress. However, they tend to work out rather poorly in the somewhat more nuanced situations we experience in the present day.

To end things on a more positive note… I kept describing reciprocal adaptation in almost exclusively adversarial terms. And there’s something to it. When we are part of someone else’s problem, there’s a decent chance we will feel at least a little bit threatened by that. However, I would be remiss not to mention the sunnier side of reciprocal adaptation: mutuality. Mutuality is a kind of reciprocal adaptation in which our intentions are aligned. We have the same “ought to be”. As you probably know, mutuality produces nearly opposite results. We no longer need to build a separate mental model of our partner in reciprocal adaptation. We can substitute it with ours. This substitution pattern scales, too! If I can reliably assume that a given number of people are “like me” (that is, they have the same mental model as me), it feels like I gain superpowers. When we pool our efforts to solve a common problem, we can move mountains. Perhaps completely without merit, even infinity appears less infinite when we are surrounded by those who share our intention.

- Framing

When encountering an infinity-problem, we may have enough wherewithal to resist the urge to act on our caveman firmware. In such cases, we tend to employ a more sophisticated process to exit the “struggle” cycle. The typical name it goes by is framing: discerning a subset of the infinity-problem that is approximately the same, but does not touch infinity. Framing is a bit of a cop-out, a giving-up of sorts. It’s an admission that understanding infinity remains elusive. Framing is our way to convert a problem from one we cannot solve into one we can.

We perform this conversion by constraining the original problem. One very common technique for adding constraints is imposing a terminating condition. If we examine our instinctive “fight” response, we can spot a terminating condition: elimination of one of the participants. When we choose to fight, we convert a likely infinity-problem into a problem of winning. Shifting to this constrained problem still requires a bout of adversarial reciprocal adaptation, but only enough to reach the terminating condition.

Another way we constrain is by removing change from parts of the problem. Assuming that things stay constant feels so natural to us that we don’t even recognize it as imposing constraints. Terminating conditions and removing change interlink with each other: of course the problem will go away permanently as soon as we win.

Yet another way to constrain infinity-problems is by drawing bounds. It just feels right when we put limits on what is possible and what is not. Yes, it is possible that I will get hit by an asteroid right now, but it is so unlikely that I would prefer not to consider that. Yes, it is possible that a deadly virus will cause a global pandemic, but it is so unlikely … waaaaait a minute. Human-erected bounds are all around us, and again, they combine with terminating conditions and presumed lack of change to create an environment that feels predictable. Games are a great illustration of such environments. From chess to Minecraft, games create spaces where the contact with infinity is microdosed to actually become fun.

When we frame a problem by imposition of constraints, we make a choice. We choose to ignore the parts of the problem that lie outside of the constraints. Once framed, these parts become the dark matter of the problem. Whether we want them or not, they continue to exist. Their existence manifests through a phenomenon we call “side effects.” By definition, every framing will have them. Some framings have more side effects, and others less. For example, if you and I are in a high-stakes meeting, and you say something that I disagree with, I might instinctively choose the “fight” framing and attempt to engage in fisticuffs right there and then. Conversely, I might choose to invest a few extra moments to consider the infinity-problem I am facing, and instead decide to examine how your statements might enrich my understanding of the situation. It’s pretty clear from these two contrasting approaches that one framing will have more negative side effects than the other (it’s the first one, if you’re still wondering). We often use the word “reframing” as the name for this seeking of a more effective framing.

So it seems that we’re better off when we view framing as a deliberate process. In relation to the process of understanding, it’s a meta-process: framing defines how we proceed with our understanding. Framings are squishy and vague early on, and solidify rapidly as the process goes on. By the time we reach the “solving” stage, framings serve as foundations we build our understanding upon. To emphasize this meta-ness of framing, I will further complicate our process diagram and embed a fractal copy of it (yay, infinity!) somewhere between the “struggle” and “solve” cycles. In this way, we perceive framing as its own process of understanding, with its own “novel”, “diverge”, “converge”, and “routine” phases. And yes, I will blissfully ignore the notion of this meta-process also having its own meta-process for now. (Pop quiz: which constraining technique did I apply just now?) However, Anne Starr and Bill Torbert have an insightful exploration of that particular rabbit hole in Timely and Transforming Leadership Inquiry and Action: Toward Triple-loop Awareness, connecting awareness of this fractality of meta-processes with – what else? – Adult Development Theory. The main distinction from the larger process is that for the framing process, solution effectiveness measures the degree of side effects of the framing.

Recognizing when framing is happening and consciously shifting to this separate framing process is likely one of the most important skills one can develop. We come in contact with infinity every day. Every heated exchange with a loved one, every swing of an unseen polarity, every iron triangle (like the project management one) is us becoming aware of infinity’s touch. A picture that comes to mind is that of a three-layered world, where the top is filled with the routine of compressed models we take entirely for granted, supported by the middle layer of framings that we’re still puzzling out. At the bottom of this world are the Lovecraftian horrors of infinity that churn endlessly, occasionally shaking the foundation of our process of understanding and waking us up to the possibility that every framing is just a story we tell ourselves to avoid staring into infinity’s abyss. Those capable of diving into that abyss and enduring it long enough to glimpse a new framing are the ones who enable others to build worlds upon it.

- The problem understanding framework

With my apologies for taking a scenic route and sincere thanks for following along, I am happy to declare that we now have all the parts to return to that framework I started with. To give you a quick recap, the framework was my replacement for Cynefin and consisted of three problem classes: solved, solvable, and unsolvable.

And now, for the big reveal. Allow me to connect the problem classes to the cycles in the process of understanding. The “solved” problem class corresponds to the “apply” cycle, the “solvable” problem – to the “solve” cycle, and finally the “unsolvable” problem fits the “struggle” cycle. We apply solved problems, we solve solvable problems, and we struggle with unsolvable problems. Okay, maybe the reveal wasn’t as dramatic as I made it out to be.

I still don’t have a catchy name for it. Right now, I am going with a generic “problem understanding framework”, which is definitely not as cool as Cynefin or OODA.

When starting on this adventure, I wanted to construct a framework that had a few attributes that seemed important: ontological humility, modularity, and layering.

For me, the attribute of ontological humility meant that the framework must be rooted in the idea of constructed reality. Every problem is probably unsolvable. However, it might come with a really solid framing that makes it fit reasonably well into a solvable problem class. It might even come with a highly effective solution that elevates it into the class of solved problems. The problem’s current position within a class might shift, as our explorations of change indicate. The framework itself is just a framing and as such, has blindspots and infinity-problems within it. We can see it as a bug, or just be humble enough to admit that the world around us is much more complex than any framework can capture.

When I say “modularity”, I mean the possibility of, and encouragement for, using and remixing parts of the framework like LEGO bricks to fit a particular experience or challenge. You don’t need the whole thing. The framework also allows for reinterpreting and swapping out its parts. If you have your own way to think about infinity-problems, please do replace the pre-built bits with it. Think of it as a bunch of micro-frameworks and mental models chilling contentedly in one happy house. The whole thing hangs together, but also works as individual pieces.

The third property, layering, provides a progression from more pragmatic, surface usage to a more in-depth and rigorous one. The problem classes are already useful for orientation – and it’s okay if this is the only layer that you need in a given situation. But if you want to dig deeper, I tried to layer concepts in a way that allows gradual exploration. There is a rigorous foundation under the three simple buckets. Each layer answers a different question, starting with a simple “where am I” at the top layer, and progressing toward the forces that might be influencing me, their underlying dynamics, and why these dynamics emerge.

To give you a sense of how it’s all organized in my mind, I thought I’d put it all together in one mega-diagram.

The layers are at the top, arranged (left-to-right) from more concrete to more rigorous: starting with the pragmatic three problem classes, progressing to the process of understanding, then arriving at the learning loop, and finally revealing the predictive model fundamentals. The modules are at the bottom, placed along the spectrum of the models. Not gonna lie, it looks a bit daunting.

So wish me luck. Next, I’ll be playing with this framework and applying it in various situations. Let’s see where the process of understanding takes me. And of course, I’ll keep sharing any new learnings here.

- Limits

This story builds on the one I wrote a while ago, and adds one more leg to this stool. This third leg came as a result of examining the solution loop with the question of “What are the limits to finding a solution?”

This question has been on my mind ever since I wrote about infinity. Infinity and something very large yet finite can be very hard to tell apart. I needed a way to make sense of that, and so this additional module for the problem understanding framework was born.

Looking at the edges of the loop one by one, I can see that our mental capacity is the limit for the number of possible solutions. In other words, the diversity of our mental models is limited by our capacity to hold them. The example of trying to explain calculus to a three-year-old or adding yet another project to an overworked leader’s plate still works quite well here.

The limit of attachment becomes evident when we look at the rate of interesting updates to the model (aka flux). I will define attachment as our resistance to incorporating model updates. This one is a bit trickier. When we’ve developed a model that works reasonably well, we start exerting effort to reduce outlier updates to the model to preserve the model’s stability. Often, we apply a comforting word like “noise” to these outlier signals and learn to filter them out. It is no surprise that in doing so, we develop blind spots: places where the real signal is coming in only to be discarded as “noise”.

The limit of attachment naturally develops from having an intention. The strength of our intention influences how firmly we want to hold the “what should be” model. Some leaders have such strength of intention that it creates “reality distortion fields” around them, attracting devout followers. This can work quite well if the leader’s model of the environment doesn’t need significant adjustments. However, high intention strength hides the limit of attachment: the mental model remains constant and the growing disconfirming evidence is ignored until it is too late.

The third limit is obvious in retrospect, and I am surprised I hadn’t noticed it sooner. The edge between solution and outcome (what I called effectiveness) is limited by time. To understand how effective my solution is, I must invest some time to apply it and observe the outcome.

These three limits – capacity, attachment, and time – appear to interact with infinity in fascinating ways. When we say that adversaries are evenly matched, we implicitly state that their limits are nearly the same. In such cases, infinity asserts itself. While limits play a role, it is the drowning in recursive mental models that never reach a stable state that takes center stage.

However, adversarial adaptation is no longer an infinity-problem if your capacity is significantly higher than mine. You can easily outwit me. Similarly, if you are able to let go of your old models with less fuss than I, you are bound to outmaneuver me. Finally, if you are just plain faster than me, you can outrun me. For you, it’s a solvable problem. I, on the other hand, will still be in the midst of an unsolvable problem.

Maybe this is why superior speed, smarts, and agility are much sought-after traits in conflicts. As an aside, capacity advantage seems to come in two forms in adversarial adaptation: both being smarter and just being more numerous. Both require the opponent to have significant mental model diversity, which pushes them against the wall of their limits. This quantity trick is something that we’ve all observed with insects. A couple of ants in the house is not a big deal, but once you see a tiny rivulet of them streaming out of a crack in the kitchen window, the problem class swings toward unsolvable.

Similarly, the presence of limits can give us the impression of facing an infinity-problem when the problem is in fact solvable, just beyond our limits to reach an effective solution. In an organization caught in its leader’s “reality distortion field”, continuing to push forward might seem like fighting an invisible foe (a marker of perceiving adversarial adaptation), but in reality it may be a matter of hitting the limit of attachment. In such situations, outside observers might classify the problem as solvable, but from the inside, it will come across as unsolvable.

Put differently, limits create even more opportunities for problem class confusion. We may mischaracterize unsolvable problems as solvable – and then be surprised when the infinity shows up. We may mischaracterize solvable problems as unsolvable – and fight impossible beasts to exhaustion.

Ronald Heifetz and Marty Linsky have this lens of technical and adaptive challenges. To describe the distinction in terms of the problem classes, technical challenges would belong in the class of solvable problems, and adaptive challenges would situate in the unsolvable problem class. One of the key things the authors emphasize is how often mistaking one kind of challenge for the other is at the core of leadership failures. My hunch is that the interplay of infinity-problems and limits has a lot to do with why that happens.

Oh! Also. While you weren’t looking, I re-derived the project management triangle. If we look at the capacity, attachment, and time, we can see that they match this triangle’s corners. Time is time, of course – as in “how much time do I have?” Capacity is cost, with the question of “how much of your capacity would you like to invest?” And last but not least, attachment is scope, with the respective “how attached are you to the outcomes you desire?” This is pretty cool, right?

- A vision and a hallucination

In a conversation with one of my colleagues, we found this simple lens. We had both arrived independently at the idea that one of the strongest ways to instill coherence within an organization is to align on some more or less unified intention. After all, organizations are problem-solving entities. And as follows from the framework I’ve been going on about, intention is the force that brings an organization together. Put differently, the emergence of an organization is the effect of imposing an intention.

How might this intention be communicated? We picked the well-worn concept of the “compelling vision” to play with. The distinction we drew is that some visions, when articulated, appear to enroll everyone in aligning with the intention they communicate. Other visions come across more like hallucinations: we hear them and may even be fascinated by them, but little alignment in intention materializes. My colleague used Yahoo’s “get its cool back” from a decade ago as an example of such a hallucination. Some good things did come out of that endeavor, so there’s likely a spectrum rather than a binary distinction.

So what makes one story a vision and the other a hallucination? I am sure there are many possible explanations. I, however, want to mess with the newly derived limits framing to explore the question.

To be compelling, a vision must be posed as a solution. That is, a vision is a prediction that is based on an understanding of some problem. A resonant vision captures the full mental model of the problem: the “what is” and the “what ought to be”, as well as a plausible path to the latter. Thus, communicating a vision is an attempt to share the mental model.

It is in this process of communication that the vision’s fate is determined. We share mental models through stories. And when telling such a story, the one who communicates it must overcome all three limits to understanding these mental models — both their own and those of their recipients.

To overcome the limit of capacity, I need to ensure that the story matches the mental model diversity of those I am sharing it with. There are distinct upper and lower bounds. The mental model behind the story needs to be within the limit of tolerance: not too complex and not too simplistic. If I tell you that my vision is that “we must do good-er”, you may recognize that my mental model diversity is lower than yours, turning my vision into a hallucination. Conversely, if I write effusively and at length about animating forces, lenses, and tensions (as I regrettably do), the mental model will bounce off of you, suffering the same fate. The limit of capacity is about the balance of clarity and rigor.

The limit of time manifests as the plausibility of the vision. We notice this limit when we see the “5-year” or “10-year” qualifiers attached to vision docs. When I communicate the vision’s story, I must have a sense of when this vision will come true. For their part, the recipients of the story, once they acquire the mental model behind the vision, will intuit its feasibility. They may go “yeah, that feels right” or balk at the overly ambitious timelines. I once suggested at a leads offsite that a product that hadn’t even shipped would have one million users the next year. My colleagues were nice to me, but I was clearly hallucinating. A good way to remember this limit is to imagine me painting pictures of some clearly impossible future and folks quietly rolling their eyes.

The final limit — the limit of attachment — is the trickiest. Suppose I’ve told the story clearly. You get exactly what I mean, and see the respectable depth of the mental model. You also see that my vision is plausible. Yay! We overcame the first two limits. But… is it where you want to go? Imagine that, in playing with this mental model, you recognize with dread that pursuing it would negatively impact your career or perhaps compensation — or both. Or you might see some effect on the environment or surrounding community that is in conflict with your principles. Does my story contain room for flexibility? And if not, how might you work around it? In communicating our vision, we encounter the limit of attachment as the resistance to change – and always, always, any alignment of intentions means change.

It is here that visions most commonly transmute into hallucinations. No matter how well-articulated and rigorous, no matter how plausible, if we are firmly attached to our particular outcomes, we won’t be able to align our intentions toward some common goal. What’s worse, there is very little that I can say in my story to overcome this limit. The limit of attachment is a structural property of the organization.

Lisa Laskow Lahey and Robert Kegan called this limit the “Immunity to Change”, and it is my intuition that most organizations and leaders have only a vague awareness of it. My guess is that the limit of time is the best-understood of the three, while the limit of capacity is the one that most strategy-minded folks exhaust and burn themselves out trying to overcome. The limit of attachment shows up sporadically in conversations here and there (usually characterized as “politics” and “shenanigans” or “this team getting mad at us”), remaining almost entirely submerged in the vast subconscious of the organization. It is the embodiment of thousands of stories told and retold within the organization, a zombie horde against which no single new story stands a chance.

- Intentionality and meaning

So far, I’ve been talking about intention as a fairly straightforward, singular thing. I have an intention, you have an intention, the bunny has an intention, and so on. As you probably suspected all along, this is at best a gross simplification of “spherical cow” proportions. I am pretty sure I can’t describe the full complexity of what’s actually happening. But here’s a story that tries.

We live in a world teeming with intentions. We are surrounded by them, we are in them, and they are within us. Intentions permeate us. Many (most?) of these intentions are not easily visible to us. When discussing mental models, I used the image of a massively multi-process computer, which might come in handy here. Imagine that our consciousness is a lone terminal connected to this computer. This terminal can only track a tiny fraction of the intentions at a time. Most of them exist in the background, without our awareness.

It would be nice if our intentions operated on some unified model of “what is” and “what ought to be”. But no, it turns out that is way too much to ask of a good old human brain. Since mental models are diverse and inconsistent, they produce a dizzying array of intentions pointing in all different directions, creating tension and friction among them.

Sometimes we suffer intensely because two internal intentions are at odds with one another – and it may take years to recognize that the conflict was entirely due to mental model inconsistency. We may even recognize with sadness that the intentions that caused us so much suffering were one and the same, just viewed through the lenses of two mutually inconsistent mental models. Worse yet, a particularly severe tension might trigger adversarial adaptation within ourselves, where two intentions form entire conflicting parts of us locked in a battle. Through this lens, bad habits and addiction are bits of the infinity-problem sprinkled onto us.

It’s like we are these cauldrons of intention stew, spiced with infinity. In this stew, intentionality is the practice of observing our own intentions, orienting them in relation to one another, and deciding to act on some and not others. This description might trip something in your memory: these are the steps of the OODA loop, also known as the solution loop. And if there’s a solution loop, then there’s definitely a problem lurking about. What is this problem that the practice of intentionality aims to solve? Why would we want to understand our own intentions?

It is my guess that the problem behind the practice of intentionality is the problem of meaning. This is a big leap, and I am in thoroughly uncertain territory here. I am definitely intimidated by the sheer size of the topic I am gingerly stepping into. Yet, it seems useful to imagine that the more our internal intentions are aligned with one another, the more meaningful our lives feel to us. Conversely, when intentions within us are less aligned, we experience loss of meaning. Put differently, a sense of meaning in our lives is proportional to how well we can navigate the multitude of our internal intentions.

If we believe this, the crisis of meaning that many adults encounter in the second half of their lives might not be due to a lack of intentions, but rather to their overabundance. If I live long enough, I will have accumulated a great cache of mental models over the years. And if I don’t practice intentionality, I will necessarily be left with a boiling soup of intentions. The sense of being lost and without purpose emerges from every single intention seemingly conflicting with every other, like a giant ball of spaghetti. What is up with all the food metaphors? I guess it’s spaghetti soup now.

Building on that, if I imagine an environment where compressed mental models are abundant and easily accessible, the crisis of meaning might be something that arrives much sooner than middle age – and becomes much more pervasive. Rapid acquisition of mental models without accompanying intentionality seems like a recipe for disaster. In an age when knowledge is so easily acquired, teaching intentionality becomes paramount.

If I click the zoom level up from individuals to organizations, I can see how the same dynamic applies. The challenges of coherence that manifest in large, mature organizations might be the result of an overabundance of individual intentions (teams, sub-teams, people, etc.) that do not add up to a single intention that brings the organization together. That single intention can only emerge through a rigorous practice of intentionality – both within the organization and within the individuals who comprise it. We can call this practice by many different names – be that self-reflection, mindfulness, or strategic thinking – but one thing seems fairly certain: without mastering it, we end up in a crisis of meaning.

- Stakes

This is not as well-formed as it could be, but it felt worth capturing. I’ve been struggling to describe the limit of attachment in various ways, and the concept of “stakes” seems like such a good topic to explore.

There’s something about the phrase “a high-stakes situation” that paints a vivid picture of a stressful encounter. When stakes are high, we are alert, anxious, and ready to act. When stakes are low, we’re relaxed, chill, and maybe even bored. But what are these “stakes”? Where do they come from?

Trying to orient the concept within the problem understanding framework, I characterized stakes as the degree of our attachment to an intention. I am loading a few things into this burrito of a definition, so let me try to unroll it.

First, stakes are associated with an intention. In a world without intentions, there aren’t stakes. This seems important somehow, given that every intention represents a problem. Stakes are how we measure the significance of our finding a solution to this problem. It doesn’t matter much whether we find solutions to low-stakes problems. It matters a lot for high-stakes ones.

Second, my use of the word “attachment” indicates which limit of understanding is being tested. The higher the stakes, the less likely we are to incorporate disconfirming evidence into our model and adjust it. When stakes are low, our limit of attachment no longer constrains our process of understanding. For example, a brainstorming session or a generative meeting usually requires a low-stakes setting.

Why is it that we are attached more to some intentions and not others? Let me introduce a kind of intention that I’ve touched on briefly when discussing homeostasis: the intention to exist. The existential intention is something that comes built-in with our wetware. Even before we are capable of forming a coherent thought, we already somehow have the most primitive mental model that includes a “what is” with us in it, and “what should be” with us continuing to be in it. We are born into solving our existential problem.

My guess is that this existential intention is something that undergirds most of our intentions. Put differently, stakes rise when any of the mental models we contain start predicting outcomes in which we no longer exist. Remember the predator wanting to eat me in one of the earlier posts? That’s a high-stakes situation. One of those “what should be” outcomes had “me” replaced with “meal”.

Existential intentions have a firmly fixed “what should be” that is non-negotiable, which makes them a source of distortions to the rest of our mental models. Bumping into the limit of attachment will tend to do that. Especially in the early stages of our development, this can lead to our mental models getting into seriously weird states. One way to think of psychology might be as the entire discipline dedicated to untangling the mental models tied in vicious loops of adversarial adaptation within one person’s mind.

Because of these distortions, existential intention often gets in the way of living joyfully. For example, whenever I feel nervous before speaking to a large audience, I am likely experiencing some entanglement with existential intention. Somewhere in the depths of my brain, there’s a mental model making a literal existential-scale prediction: that perhaps I may be mauled to death by the audience or some absurdity of the sort. Usually, the mental model jumps through several hoops before arriving at “and then I will die!” and may include classics like “everyone will laugh at me” and “this will be the end of my career” and “my family will disown me and kick me out to the street” and so on. It may be only a teensy-weensy part of the overall mental model, a part that is easily overwhelmed by other, more mature and confident mental models. But the mere fact of me feeling nervous tells me that it’s there – freaking out and trying to avert my imminent demise.

Because our mental models are developed individually, we all have our own unique configurations of existential intention entanglements. Situations that are high-stakes for some may be totally chill for others. Though socialization brings a degree of sameness, our evaluation of stakes remains deeply personal. For example, when creating a low-stakes environment for a generative conversation, we may mistakenly presume that an environment that works for us will work for all of the participants. I’ve made this mistake a whole bunch of times. The best approach I know is to manage the stakes dynamically, staying aware of the participants’ engagement and helping them navigate their tangles of existential intentions.